When AI reaches its limits in asset production: Why standard tools are not enough and how to make your AI asset creation scalable

Many communications and marketing teams are already using generative AI, and the first "aha" moments come quickly. Image generators such as Google's Nano Banana and OpenAI's ChatGPT Image, and video models such as OpenAI's Sora or Google Veo 3, are on everyone's lips. A hero motif, a mood clip, a few social variants, and the prototype is ready. But then production often comes to a standstill. Not because there is a lack of ideas, but because implementation in real brand work collides with real requirements: confidential product information must not be uploaded to the cloud, content policies block legitimate motifs, and "a good prompt" is not yet a repeatable process.

The difference between "trying out AI" and "AI delivering value" comes down to three things:

- Control (brand safety, rights, approvals)

- Consistency (look and feel across different variants, scenes, and time periods)

- Production capability (reproducibility and quality management)

The three biggest AI stumbling blocks for marketing assets

1. Content policies often affect the wrong people

An underwear manufacturer wants to create subtle, high-quality motifs, but is blocked by the platform for showing too much bare skin, even though everything remains within the bounds of regular product communication. A hospital is planning a corporate film and needs striking medical scenes, which are blocked as well. Providers such as Google or OpenAI must prevent abuse, but their standard tools do not distinguish well enough between abuse and legitimate professional contexts.

2. Data protection and confidentiality conflict with cloud workflows

Especially with prototypes (for example, new machines or upcoming product lines in the electrical appliance segment), uploads to external services are often taboo because of IP risk, NDAs, and internal governance.

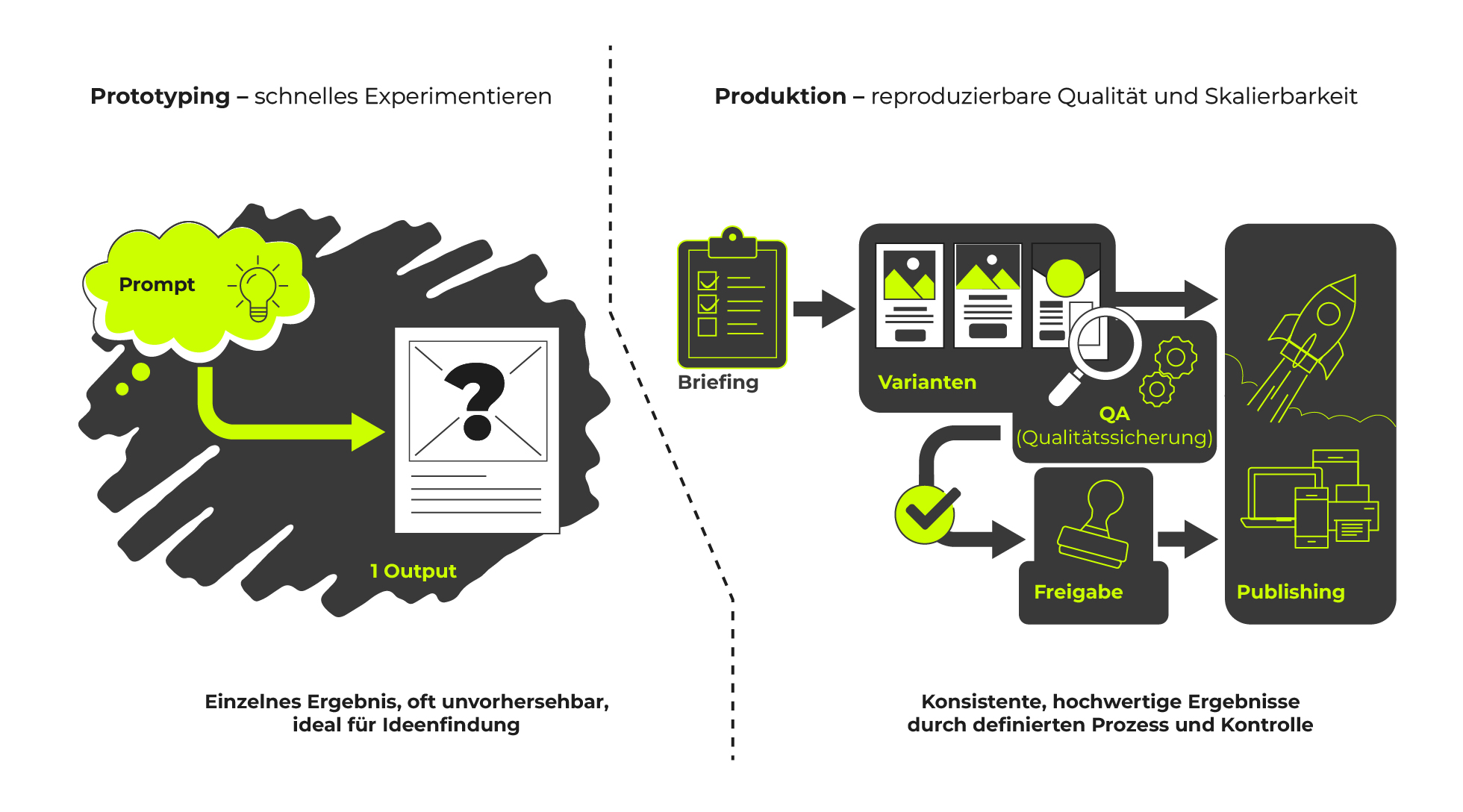

3. Reproducibility: Prompts alone are not a process

In prototyping, a single prompt often delivers exactly the desired result. In production, however, that result is difficult to reproduce or scale. Consistent quality, consistent imagery, and clear parameters: not a chance. Instead of reliability, there are deviations, iteration loops, and inconsistent assets. Without defined workflows, a good AI result remains a lucky hit rather than the output of a robust production tool. Tool sprawl and dependence on external roadmaps exacerbate the problem.

Models change, features disappear, cost structures shift. Anyone embedding AI into ongoing processes needs predictability: versioning, documented setups, and controlled updates.
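One way to make "versioning and documented setups" tangible is to store every generation setup as a pinned, hashable record next to the asset it produced. The following is a minimal sketch under stated assumptions, not a description of any specific tool: the class `GenerationSetup`, its fields, the helper `manifest_entry`, and all example values are hypothetical and would need to be mapped to whatever parameters your local pipeline actually exposes.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class GenerationSetup:
    """Hypothetical record of everything needed to re-run one generation."""
    model_name: str       # name of the locally hosted model (assumed field)
    model_sha256: str     # checksum of the exact weights file in use
    prompt: str
    negative_prompt: str
    seed: int             # a fixed seed is a precondition for repeatable output
    steps: int
    guidance_scale: float
    width: int
    height: int

    def fingerprint(self) -> str:
        """Short stable ID: identical setups always map to the same value."""
        canonical = json.dumps(asdict(self), sort_keys=True, ensure_ascii=False)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def manifest_entry(setup: GenerationSetup, asset_path: str) -> dict:
    """Pair an asset file with the documented setup that produced it."""
    return {
        "asset": asset_path,
        "setup_id": setup.fingerprint(),
        "setup": asdict(setup),
    }


# Example values are placeholders, not recommendations.
base = GenerationSetup(
    model_name="example-image-model",
    model_sha256="0" * 64,
    prompt="product on a neutral studio background",
    negative_prompt="text, watermark",
    seed=1234,
    steps=30,
    guidance_scale=6.5,
    width=1024,
    height=1024,
)

# A variant differs only in the seed, so the change is explicit and traceable.
variant = replace(base, seed=1235)

manifest = [
    manifest_entry(base, "assets/hero_v1.png"),
    manifest_entry(variant, "assets/hero_v2.png"),
]
print(json.dumps(manifest, indent=2))
```

Because the fingerprint changes whenever any parameter or the model checksum changes, a controlled update becomes a visible diff in the manifest rather than a silent shift in output.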

Local AI models as enablers – with clear governance

Local AI models provide a remedy here: they offer more control over data, setups, and availability. Typical "hidden champions" in the stack include Stable Diffusion, Flux AI, Qwen Image, and Z-Image. The model name is less important than the orchestration: targeted model selection, finely tuned workflows, and operation in a controlled environment, for example in your own or a rented data center, or on a local computer isolated from the internet.

Important: "Local" does not mean "unregulated." Only with your own guidelines (rights, consent, brand safety) does AI become scalable and responsible.

This is our typical approach

Initial assessment → Workshop → Concept → Implementation → Iterative optimization

Whether your team manages the pipeline itself later on or we provide ongoing support: both options are possible.

In the workshop, we focus specifically on decision-making rather than tool discussions: you will receive clear use case prioritization, a recommendation for an operating model and the appropriate environment, as well as a concrete roadmap for how to get from individual outputs to variant production – including quality assurance, roles, and process points.

Especially in localized brand communication, "one size fits all" is outdated. Today, it's more like "one fits none." Teams that build a clean process for producing asset variants gain speed, ensure consistency, and can scale.


Interested?

In this workshop, we will show you how to turn AI experiments into reliable asset production.
